Sensory & Photonic Vision Systems

Dynamic Vision Sensors (DVS) are a type of spiking silicon retina in which each pixel autonomously and asynchronously sends out an address event whenever the light it senses changes by more than a given relative threshold. These cameras are "Frame-Free": they do not generate sequences of still frames, as conventional commercial cameras do, but provide a flow of spiking address events that dynamically represents the changing visual scene. They are heavily inspired by biological retinas, which also continuously send nerve spike impulses to the cortex. Biological retinas vibrate continuously through microsaccades and ocular tremors, so they too produce spikes whenever the light falling on them changes. DVS cameras provide an almost instantaneous representation (with microsecond delays) of the changing visual scene, with a sparse, much reduced data flow and low power consumption, which reduces the data processing requirements of subsequent stages. DVS cameras have recently attracted strong industrial interest, with a number of spin-off companies commercializing them (Prophesee, iniVation, CelePixel) and large traditional companies such as Samsung and Sony embracing these developments.
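The per-pixel behaviour described above can be illustrated with a minimal software sketch. It is not the circuit used in any of the sensors mentioned here: it assumes that the "relative threshold" is modelled as a fixed step in log intensity, that the pixel is sampled at discrete times (a real DVS pixel is a continuous-time analog circuit), and that the threshold value of 0.15 is purely illustrative. The names `Event` and `dvs_events` are hypothetical.

```python
import math
from typing import Iterable, List, NamedTuple, Tuple


class Event(NamedTuple):
    """One address event: when it happened, which pixel, and its sign."""
    t_us: int      # timestamp in microseconds
    x: int         # pixel column (the "address")
    y: int         # pixel row (the "address")
    polarity: int  # +1 = light increased, -1 = light decreased


def dvs_events(samples: Iterable[Tuple[int, float]], x: int, y: int,
               threshold: float = 0.15) -> List[Event]:
    """Turn (t_us, intensity) samples of ONE pixel into address events.

    Assumption: a relative change is detected as a step of `threshold`
    in log intensity, measured against the level stored at the last event.
    Intensities must be strictly positive.
    """
    events: List[Event] = []
    it = iter(samples)
    _, first_intensity = next(it)
    ref = math.log(first_intensity)  # level memorised by the pixel

    for t_us, intensity in it:
        diff = math.log(intensity) - ref
        # A large change produces several events, one per threshold step.
        while abs(diff) >= threshold:
            polarity = 1 if diff > 0 else -1
            events.append(Event(t_us, x, y, polarity))
            ref += polarity * threshold
            diff = math.log(intensity) - ref
    return events


if __name__ == "__main__":
    # A pixel that is static, then brightens, then darkens.
    trace = [(0, 100.0), (10, 100.0), (20, 150.0), (30, 150.0), (40, 90.0)]
    for ev in dvs_events(trace, x=3, y=7):
        print(ev)
```

In this sketch a pixel whose intensity never changes produces no events at all, which is the source of the data sparsity mentioned above; only the samples at which the light changes by more than the threshold contribute to the output stream.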

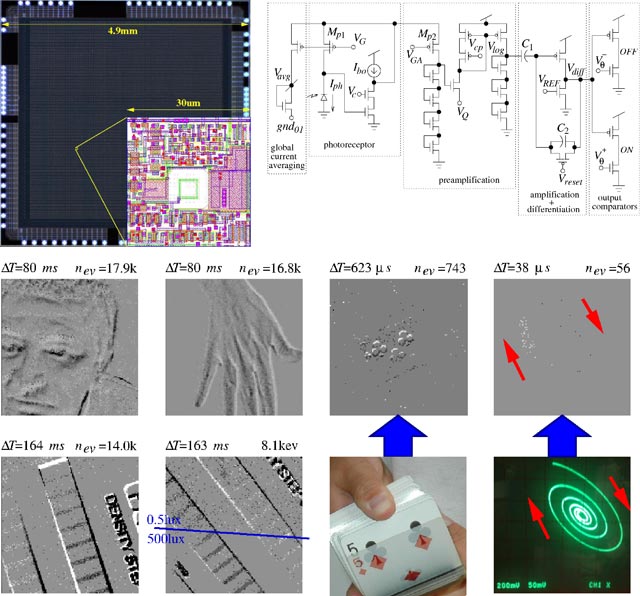

At IMSE, the Neuromorphic Group runs a dedicated research line on AER (Address Event Representation) DVS cameras. The group coordinated the CAVIAR EU project, in which this type of sensor was first invented and exploited. Later, they developed their own prototype, which at the time had the best contrast sensitivity, power consumption, and circuit compactness. This work resulted in 4 licensed patents and participation in the French spin-off company Chronocam, now known as Prophesee.

Main recent activities in this line include:

Bernabé Linares Barranco


Teresa Serrano Gotarredona


Luis A. Camuñas Mesa


A. Yousefzadeh, G. Orchard, T. Serrano-Gotarredona and B. Linares-Barranco, "Active Perception with Dynamic Vision Sensors. Minimum Saccades with Optimum Recognition", IEEE Transactions on Biomedical Circuits and Systems, vol. 12, no. 4, pp 927-939, 2018

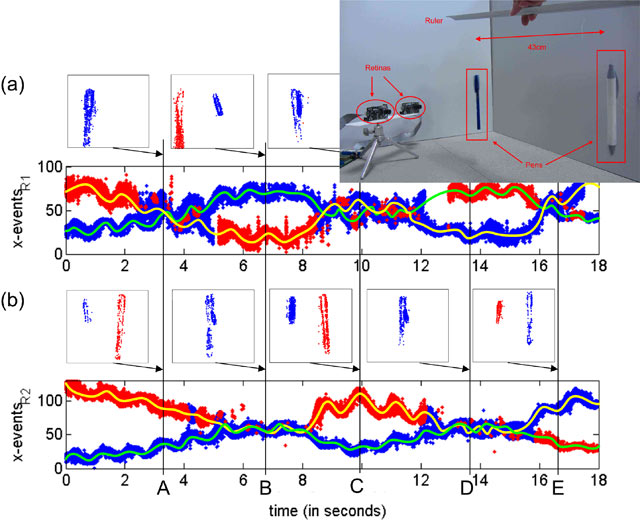

[B] L.A. Camuñas-Mesa, T. Serrano-Gotarredona, S. Ieng, R. Benosman and B. Linares-Barranco, "Event-Driven Stereo Visual Tracking Algorithm to Solve Object Occlusion", IEEE Transactions on Neural Networks and Learning Systems, vol. 29, no. 9, pp 4223-4237, 2017

T. Serrano-Gotarredona and B. Linares-Barranco, "Poker-DVS and MNIST-DVS. Their History, How They were Made, and Other Details", Frontiers in Neuromorphic Engineering, Frontiers in Neuroscience, vol. 9, article 481, 2015

[A] T. Serrano-Gotarredona and B. Linares-Barranco, "A 128x128 1.5% Contrast Sensitivity 0.9% FPN 3µs Latency 4mW Asynchronous Frame-Free Dynamic Vision Sensor Using Transimpedance Amplifiers", IEEE Journal of Solid-State Circuits, vol. 48, no. 3, pp 827-838, 2013

J.A. Leñero-Bardallo, T. Serrano-Gotarredona and B. Linares-Barranco, "A 3.6µs Latency Asynchronous Frame-Free Event-Driven Dynamic-Vision-Sensor", IEEE Journal of Solid-State Circuits, vol. 46, no. 6, pp 1443-1455, 2011

Patent. T. Finateu, B. Linares-Barranco, C. Posch and T. Serrano-Gotarredona, "Pixel Circuit for Detecting Time-Dependent Visual Data", WO2018073379A1. Priority: 20-Oct-2016. European patent, extended to US, Korea, Japan, China.

Patent. T. Finateu, B. Linares-Barranco, C. Posch and T. Serrano-Gotarredona, "Sample and Hold based Temporal Contrast Vision Sensor", WO2017174579A1. Priority: 4-Apr-2016.

Patent. B. Linares-Barranco and T. Serrano-Gotarredona, "Method and Device for Detecting the Temporal Variation of the Light Intensity in a Matrix of Photosensors", WO2014091040A1. Priority: 11-Dec-2012. European patent, extended to US, Korea, Japan, Israel.

Patent. B. Linares-Barranco and T. Serrano-Gotarredona, "Low-Mismatch and Low-Consumption Transimpedance Gain Circuit for Temporally Differentiating Photo-Sensing Systems in Dynamic Vision Sensors", WO2012160230A1. Priority: 26-May-2011. European patent, extended to US, Korea, Japan, China.

Prophesee

Spin-off Company. Metavision for machines


APROVIS3D: Analog PROcessing Of Bioinspired Vision Sensors For 3D Reconstruction

PI: Teresa Serrano Gotarredona

Funding Body: Min. de Ciencia e Innovación

Apr 2020 - Mar 2023

COGNET: Event-based cognitive vision system. Extension to audio with sensory fusion

PI: Teresa Serrano Gotarredona

Funding Body: Min. de Ciencia e Innovación

Jan 2016 - Dec 2019

ECOMODE: Event-driven compressive vision for multimodal interaction with mobile devices

PI: Bernabé Linares-Barranco

Funding Body: European Union

Jan 2015 - Dec 2018


BIOSENSE: Bioinspired event-based system for sensory fusion and neurocortical processing. High-speed low-cost applications in robotics and automotion

PI: Teresa Serrano Gotarredona

Funding Body: Min. de Ciencia e Innovación

Jan 2013 - Dec 2015

NANONEURO: Design of neurocortical architectures for vision applications

PI: Teresa Serrano Gotarredona

Funding Body: Junta de Andalucía

Jul 2011 - Dec 2014